Get Microsoft DP-203 Exam Dumps

Microsoft Data Engineering on Microsoft Azure Exam Dumps

This bundle pack includes the following 3 formats:

* DP-203 Desktop Practice Test Software (Total Questions: 316)

* DP-203 Questions & Answers (PDF) (Total Questions: 316)

* DP-203 Web Based Self Assessment Practice Test

The following are some DP-203 exam questions for review:

You have an Azure subscription that contains an Azure Data Factory data pipeline named Pipeline1, a Log Analytics workspace named LA1, and a storage account named account1.

You need to retain pipeline-run data for 90 days. The solution must meet the following requirements:

* The pipeline-run data must be removed automatically after 90 days.

* Ongoing costs must be minimized.

Which two actions should you perform? Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

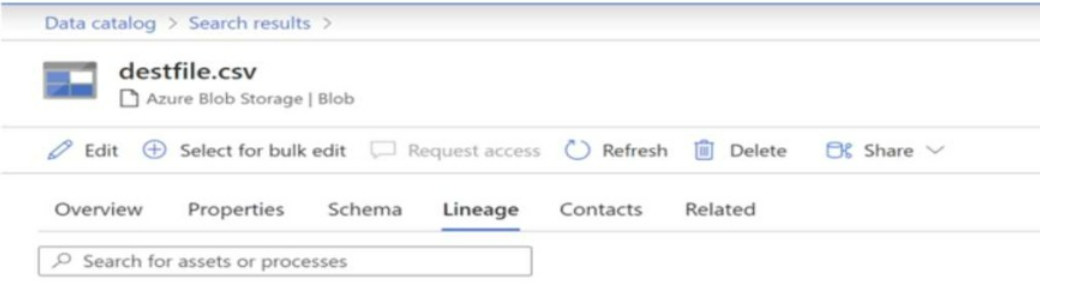

You have a Microsoft Purview account. The Lineage view of a CSV file is shown in the following exhibit.

How is the data for the lineage populated?

You have an Azure Data Factory pipeline named pipeline1 that is invoked by a tumbling window trigger named Trigger1. Trigger1 has a recurrence of 60 minutes.

You need to ensure that pipeline1 will execute only if the previous execution completes successfully.

How should you configure the self-dependency for Trigger1?
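For orientation, a tumbling window trigger's self-dependency is declared in the `dependsOn` array of the trigger definition. The Python sketch below builds an illustrative fragment of such a definition and prints it as JSON. The helper name `self_dependency` is hypothetical, the fragment omits required fields such as `startTime` and the pipeline reference, and the `offset` and `size` values are assumptions chosen to point at the immediately preceding 60-minute window, not a confirmed answer key.

```python
import json


def self_dependency(offset: str, size: str) -> dict:
    """Hypothetical helper: build one self-dependency entry for 'dependsOn'."""
    return {
        "type": "SelfDependencyTumblingWindowTriggerReference",
        "offset": offset,  # negative timespan: look back at an earlier window
        "size": size,      # length of the window being depended on
    }


# Illustrative fragment only; a deployable trigger also needs startTime,
# a pipeline reference, and so on.
trigger_fragment = {
    "name": "Trigger1",
    "properties": {
        "type": "TumblingWindowTrigger",
        "typeProperties": {
            "frequency": "Hour",
            "interval": 1,
            # Assumed values: depend on the previous one-hour window.
            "dependsOn": [self_dependency("-01:00:00", "01:00:00")],
        },
    },
}

print(json.dumps(trigger_fragment, indent=2))
```

With a self-dependency in place, a window is expected to run only after the window it depends on has succeeded, which is the behavior the question asks for.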

You have an Azure subscription that contains an Azure Synapse Analytics dedicated SQL pool named Pool1. Pool1 receives new data once every 24 hours.

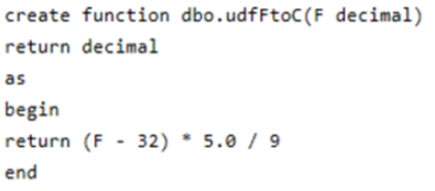

You have the following function.

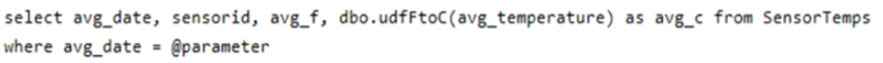

You have the following query.

The query is executed once every 15 minutes and the @parameter value is set to the current date.

You need to minimize the time it takes for the query to return results.

Which two actions should you perform? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

You have an Azure Synapse Analytics dedicated SQL pool that contains a table named Table1. Table1 contains the following:

* One billion rows

* A clustered columnstore index

* A hash-distributed column named Product Key

* A column named Sales Date that is of the date data type and cannot be null

Thirty million rows will be added to Table1 each month.

You need to partition Table1 based on the Sales Date column. The solution must optimize query performance and data loading.

How often should you create a partition?
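The arithmetic behind this kind of question can be sketched as follows. A dedicated SQL pool spreads each table over 60 distributions, and the usual guidance for clustered columnstore tables is roughly 1 million rows per distribution per partition. The sketch assumes the 30 million monthly rows spread evenly across distributions; the candidate partition sizes are illustrative and not taken from the question.

```python
DISTRIBUTIONS = 60                     # fixed for a dedicated SQL pool
TARGET_ROWS_PER_DISTRIBUTION = 1_000_000  # columnstore guidance per partition
ROWS_PER_MONTH = 30_000_000            # from the question


def rows_per_distribution(months_per_partition: int) -> int:
    """Rows landing in one distribution of one partition, assuming even spread."""
    return ROWS_PER_MONTH * months_per_partition // DISTRIBUTIONS


# Illustrative partition granularities, expressed in months.
candidates = [("monthly", 1), ("quarterly", 3), ("yearly", 12)]

for label, months in candidates:
    rows = rows_per_distribution(months)
    meets_target = rows >= TARGET_ROWS_PER_DISTRIBUTION
    print(f"{label}: {rows:,} rows per distribution, meets target: {meets_target}")
```

Under these assumptions a monthly partition holds about 500,000 rows per distribution, below the guidance, while coarser partitions clear it.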
